OpenClaw-style Personal AI Agent

This autonomous AI agent acts as your digital executive assistant, connecting directly to your Slack, Google Calendar, GitHub, and email to handle your entire daily workflow through simple natural language commands.

My Role

Sole architect & developer — designed the agent graph topology, implemented all 7 sub-agents, built the real-time frontend, and deployed production infrastructure.

Duration

3 months

Year

2026

Tech Stack

Status

Live in Production

Executives spend 3+ hours daily on repetitive coordination — scheduling meetings, triaging emails, updating CRMs, and coordinating across platforms. Existing automation tools like Zapier handle simple if-then rules, but can't understand context, prioritize intelligently, or handle multi-step workflows that require judgment and cross-platform coordination.

I engineered a hierarchical multi-agent system using LangGraph's supervisor pattern. A central orchestrator interprets natural language, decomposes each request into sub-tasks, and delegates them to 7 specialized agents that execute in parallel. The system learns user preferences through a persistent memory layer backed by pgvector, and it requires human-in-the-loop approval for high-risk actions such as transactions over $500 or code changes.

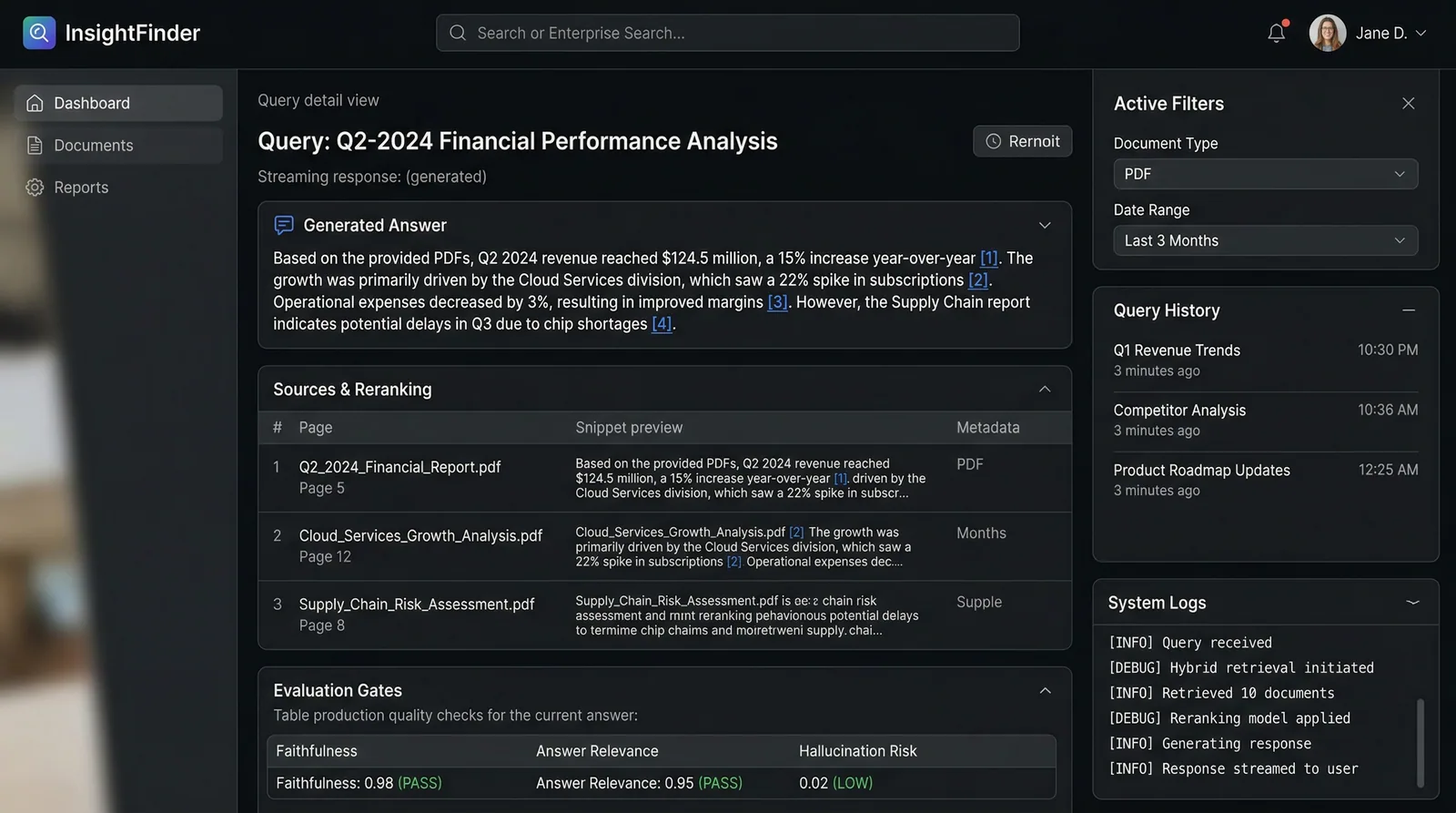

Hierarchical Agent Orchestration

A LangGraph supervisor graph coordinates 7 specialized sub-agents (Calendar, Transport, Communication, Code, CRM, Research, Admin) with automatic task decomposition and parallel execution.

Human-in-the-Loop Approval

Configurable approval gates for high-risk actions — transactions over $500, code pushes, and external communications pass through explicit user confirmation before execution.
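A minimal sketch of how such a gate could be expressed, assuming a simple action record. The `Action` type, the action kind names, and the constant names are hypothetical and chosen only for illustration; the threshold and the gated categories come from the description above.

```python
from dataclasses import dataclass

# Assumed configuration, mirroring the rules described above.
APPROVAL_THRESHOLD_USD = 500.0
ALWAYS_GATED_KINDS = {"code_push", "external_communication"}


@dataclass(frozen=True)
class Action:
    kind: str                # hypothetical labels, e.g. "payment", "code_push"
    amount_usd: float = 0.0  # monetary value of the action, if any


def requires_approval(action: Action) -> bool:
    """Return True when the action must wait for explicit user confirmation."""
    if action.kind in ALWAYS_GATED_KINDS:
        return True
    # "Over $500" is read here as strictly greater than the threshold.
    return action.amount_usd > APPROVAL_THRESHOLD_USD
```

Under these assumptions, a $750 payment or any code push would pause for confirmation, while a $120 payment or a calendar update would proceed directly.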

Persistent User Memory

pgvector-backed preference learning remembers choices like Zoom vs Meet, United vs Delta, and preferred meeting times, improving with every interaction.
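The idea behind similarity-based preference recall can be sketched in plain Python. This is an in-memory stand-in, not the pgvector-backed store described above: the `PreferenceMemory` class, its method names, and the toy three-dimensional embeddings are all assumptions for illustration. In pgvector, a comparable lookup would order rows by a distance operator such as `<=>` (cosine distance).

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class PreferenceMemory:
    """Hypothetical in-memory store of (embedding, preference) pairs."""

    def __init__(self):
        self._items = []

    def remember(self, embedding, preference):
        self._items.append((list(embedding), preference))

    def recall(self, query_embedding, k=1):
        """Return the k stored preferences most similar to the query."""
        ranked = sorted(
            self._items,
            key=lambda item: cosine_similarity(query_embedding, item[0]),
            reverse=True,
        )
        return [preference for _, preference in ranked[:k]]


memory = PreferenceMemory()
memory.remember([1.0, 0.0, 0.0], "video calls: Zoom")
memory.remember([0.0, 1.0, 0.0], "airline: United")
closest = memory.recall([0.9, 0.1, 0.0])  # query close to the first vector
```

A real deployment would obtain embeddings from a model rather than hand-written vectors, and would let the database perform the ranking.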

Real-time Execution Visualization

Live workflow DAG showing each agent's state transitions, execution logs, and approval gates as tasks progress from request to completion.

Multi-Platform Integration

Native connections to Slack, Google Calendar, GitHub, Twilio SMS, and Uber APIs — all orchestrated through a single natural language interface.

Key technology choices and the reasoning behind each decision.

LangGraph 0.2.5

AI / ML

Chosen over CrewAI for its explicit state machine model, which gave fine-grained control over agent transitions and human approval gates. CrewAI's implicit delegation made debugging complex workflows nearly impossible.

Claude Sonnet 4.6

AI / ML

Selected as the planning LLM for superior instruction-following and structured output reliability. Tested GPT-4o but found it hallucinated tool calls 15% more frequently in multi-step planning scenarios.

Neon Postgres + pgvector

Data

Chose serverless Postgres over Pinecone for cost efficiency at our scale (<100k vectors). pgvector's tight SQL integration simplified preference queries and reduced infrastructure overhead.

Next.js 15 App Router

Frontend

Server Components reduced client-side JS by 40% for the dashboard. Server Actions simplified real-time execution log streaming without needing separate WebSocket infrastructure.

Hierarchical multi-agent orchestration with human-in-the-loop approval gates and persistent memory.

Input Layer

Voice/Text → Intent Classification via Claude Sonnet 4.6

Task Planning

LangGraph Supervisor decomposes request into sub-tasks with dependency graph

Agent Dispatch

Parallel execution across CalendarAgent, TransportAgent, CommunicationAgent, CodeAgent, CRMAgent

Approval Gate

Human-in-the-loop checkpoint for high-risk actions ($500+, code changes, external comms)

Execution

Approved tasks hit live APIs — Google Calendar, Uber, Twilio, GitHub

Confirmation

Results aggregated → Slack notification + Dashboard update + SMS confirmation
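The Task Planning and Agent Dispatch stages above can be illustrated with a small standard-library sketch: a dependency graph is grouped into batches, and the tasks in each batch run concurrently. This is not LangGraph code. The task names, the `plan_batches` and `run_plan` helpers, and the handler mapping are assumptions made for illustration.

```python
import asyncio
from graphlib import TopologicalSorter


def plan_batches(dependencies):
    """Group tasks into ordered batches; tasks in one batch have no unmet dependencies.

    `dependencies` maps each task name to the set of tasks it depends on.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


async def run_plan(dependencies, handlers):
    """Run each batch concurrently; a batch starts only after the previous one ends."""
    results = {}
    for batch in plan_batches(dependencies):
        outputs = await asyncio.gather(*(handlers[task]() for task in batch))
        results.update(zip(batch, outputs))
    return results


# Hypothetical request: schedule a meeting, then arrange a ride and notify attendees.
deps = {
    "book_meeting": set(),
    "book_ride": {"book_meeting"},
    "notify_attendees": {"book_meeting"},
    "send_summary": {"book_ride", "notify_attendees"},
}
batches = plan_batches(deps)
# batches == [["book_meeting"], ["book_ride", "notify_attendees"], ["send_summary"]]
```

Here `book_ride` and `notify_attendees` land in the same batch because both depend only on `book_meeting`, so they are the two tasks that could be dispatched in parallel.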

Key technical challenges I faced and how I solved them.

Agent Coordination Deadlocks

When multiple sub-agents needed the same resource (e.g., CalendarAgent and TransportAgent both needing the appointment time), the system would deadlock waiting for shared state, causing 23% of multi-step requests to time out.

Implemented a message-passing architecture with immutable state snapshots. Each agent reads from a frozen snapshot and writes to a merge queue. The supervisor resolves conflicts using priority rules (calendar > transport > notification).
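A simplified sketch of the snapshot-and-merge idea, using only the standard library. The `freeze` and `merge` helpers and the `(agent, key, value)` proposal shape are assumptions for illustration; only the priority order (calendar > transport > notification) comes from the text.

```python
from types import MappingProxyType

# Lower number wins. Order taken from the text: calendar > transport > notification.
PRIORITY = {"calendar": 0, "transport": 1, "notification": 2}


def freeze(state):
    """Return a read-only view over a shallow copy of the shared state."""
    return MappingProxyType(dict(state))


def merge(state, proposals):
    """Apply queued (agent, key, value) proposals to a copy of the state.

    When several agents propose a value for the same key, the agent with
    the higher priority wins; earlier proposals win ties.
    """
    winners = {}
    for agent, key, value in proposals:
        current = winners.get(key)
        if current is None or PRIORITY[agent] < PRIORITY[current[0]]:
            winners[key] = (agent, value)
    merged = dict(state)
    merged.update({key: value for key, (_, value) in winners.items()})
    return merged


snapshot = freeze({"appointment_time": "14:00"})
merge_queue = [
    ("transport", "appointment_time", "14:30"),
    ("calendar", "appointment_time", "15:00"),
    ("transport", "pickup_time", "14:15"),
]
new_state = merge(snapshot, merge_queue)
# new_state == {"appointment_time": "15:00", "pickup_time": "14:15"}
```

Because agents only read the frozen snapshot and append proposals, none of them waits on another agent's lock; conflicts are settled in one place when the queue is merged. Note that `MappingProxyType` is shallow, so nested mutable values would need their own immutable representation.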

Zero deadlocks in production. Average agent coordination latency dropped from 8s to 2.3s.

LLM Tool Call Reliability

Claude occasionally generated malformed tool call arguments — wrong date formats, missing required fields — causing API failures in 12% of requests during early testing.

Added a PydanticAI validation layer between LLM output and API execution. Invalid tool calls are caught, the error is fed back to the LLM with the schema, and it self-corrects. Maximum 2 retries before human fallback.
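The validate, feed back, and retry loop can be sketched as follows. To stay dependency-free, this sketch uses a hand-written validator rather than PydanticAI, so it shows the control flow only. The `create_event` tool, its fields, the `call_llm` callable, and the `HumanFallback` exception are hypothetical names.

```python
from datetime import date

MAX_RETRIES = 2  # from the text: at most two retries before human fallback


class HumanFallback(Exception):
    """Raised when no valid tool call is produced within the retry budget."""


def validate_create_event(args):
    """Return a list of validation errors for a hypothetical create_event call."""
    errors = []
    for field in ("title", "start_date"):
        if field not in args:
            errors.append(f"missing required field: {field}")
    if "start_date" in args:
        try:
            date.fromisoformat(args["start_date"])
        except (TypeError, ValueError):
            errors.append("start_date must be an ISO date (YYYY-MM-DD)")
    return errors


def get_valid_tool_call(call_llm, prompt):
    """Request tool arguments, feeding validation errors back after each failure.

    `call_llm(prompt, feedback)` is a stand-in for the model call; `feedback`
    is None on the first attempt and the error text on retries.
    """
    feedback = None
    for _attempt in range(1 + MAX_RETRIES):
        args = call_llm(prompt, feedback)
        errors = validate_create_event(args)
        if not errors:
            return args
        feedback = "; ".join(errors)
    raise HumanFallback(feedback)
```

With a schema library, the validator would be replaced by model validation and the error text by the library's structured error report, while the loop itself would stay the same.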

Tool call success rate improved from 88% to 99.2%. The retry mechanism adds only 400ms on average.
